Video generation with AI is no longer an experimental novelty; it’s a practical set of tools that creators, marketers, educators, and product teams can use today. This guide explains the main approaches (text-to-video, reference-to-video, image-to-video, frames-to-video), how they differ, when to use each, and how to build reliable workflows. I’ll also point to a platform that bundles models and templates to help you move from idea to final video faster.

What Each Approach Does (and When to Use It)

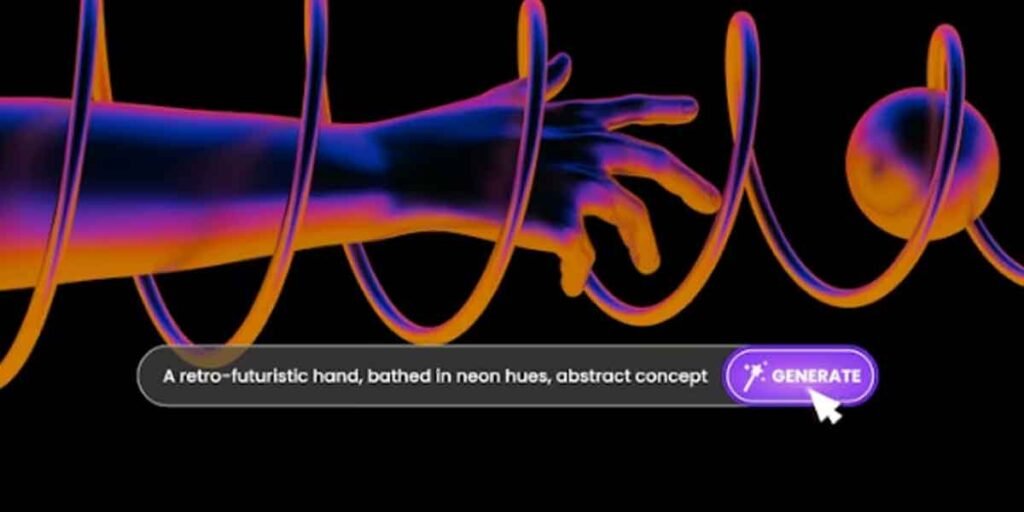

Text-to-Video

What it is: Generate video directly from a text prompt (story, scene description, camera directions).

When to use it: Fast ideation, proof-of-concept, short social clips, and creative explorations where precise visuals aren’t required.

Strengths: Easy to start (no assets needed), good for novel or abstract visuals.

Limitations: Temporal consistency and fine control over character appearance or exact framing can be hit-or-miss; often needs iteration and post-editing.

Reference-to-Video

What it is: Use one or more reference inputs (images, sample clips, color palettes, or brief motion cues) plus optional text to guide the generated video.

When to use it: You need consistent style, character likeness, or brand fidelity across shots, for example when animating a character from portfolio images or restyling footage to match a brand style frame.

Strengths: Greater control over look and continuity, easier to match branding or character design.

Limitations: Quality depends on the quality of the references and on the model’s temporal conditioning; may require multiple passes.

Image-to-Video

What it is: Animate a still image into motion—parallax, head turns, windswept hair, or subtle camera moves.

When to use it: Bring portraits, product photos, or concept art to life without full animation pipelines.

Strengths: Fast and cost-effective for short, high-impact clips.

Limitations: Often optimized for short durations and subtle motion.

Frames-to-Video (Keyframes/Storyboard Driven)

What it is: Provide a sequence of frames, sketches, or key poses; the model generates the motion between them.

When to use it: You have clear blocking or a storyboard and want smooth interpolation to produce a continuous shot.

Strengths: Excellent for choreographed scenes and predictable motion.

Limitations: Requires careful frame planning; longer sequences may need shot-splitting.
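One way to make the choice between the four approaches concrete is to start from the inputs you actually have. The sketch below is only an illustration of that reasoning: the `Inputs` fields and the priority order (keyframes first, then references, then a single still, then text alone) are assumptions for this example, not rules from any particular tool.

```python
from dataclasses import dataclass


@dataclass
class Inputs:
    """What you have on hand for a shot (all field names are illustrative)."""
    prompt: str = ""
    still_images: int = 0       # single images you want animated
    style_references: int = 0   # images or clips that define look or likeness
    keyframes: int = 0          # ordered storyboard frames or key poses


def choose_approach(inputs: Inputs) -> str:
    """Map available inputs to one of the four approaches described above."""
    if inputs.keyframes >= 2:
        # Two or more ordered frames give the model motion to interpolate.
        return "frames-to-video"
    if inputs.style_references >= 1:
        # References exist mainly to pin down style, likeness, or branding.
        return "reference-to-video"
    if inputs.still_images >= 1:
        # A single still to bring to life.
        return "image-to-video"
    if inputs.prompt.strip():
        # Nothing but words: fastest start, least control.
        return "text-to-video"
    raise ValueError("Provide a prompt, an image, a reference, or keyframes")
```

In practice the categories overlap (a prompt usually accompanies references or keyframes), so treat the result as a starting point for a pilot rather than a final decision.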

Practical Workflow for Reliable Results

- Start with a brief: duration, aspect ratio, target audience, and success criteria (photorealism, stylized, social-first).

- Collect references: high-quality images, brand frames, lighting samples, and example clips. Label and crop for consistent framing.

- Pick the right model: photorealistic models for product demos; stylized models for animation; temporally optimized models for longer shots.

- Run small pilots: 3–8 second clips to validate look and motion. Compare outputs from multiple models.

- Iterate: tweak prompts, replace or refine references, and use inpainting/frame-editing tools for fixups.

- Composite and finalize: export passes if available, add sound, color grade, and deliver for the intended platform.
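The brief and pilot steps above can be captured in a few lines of code so that every pilot run is planned the same way. This is a minimal sketch under stated assumptions: the `Brief` fields mirror the brief described above, `plan_pilots` is a hypothetical helper, and the model names in the usage example are placeholders, not real model identifiers.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class Brief:
    """The brief from the first workflow step (field names are illustrative)."""
    duration_s: float
    aspect_ratio: str
    audience: str
    success_criteria: str


def plan_pilots(brief, models, pilot_durations_s=(3, 5, 8)):
    """Return one pilot job per (model, duration) pair.

    Pilot durations are capped at the brief's target duration, and
    duplicates created by the cap are removed.
    """
    durations = sorted({min(d, brief.duration_s) for d in pilot_durations_s})
    return [
        {"model": m, "duration_s": d, "aspect_ratio": brief.aspect_ratio}
        for m, d in product(models, durations)
    ]


if __name__ == "__main__":
    brief = Brief(duration_s=6, aspect_ratio="9:16",
                  audience="social", success_criteria="stylized")
    for job in plan_pilots(brief, ["model-a", "model-b"]):
        print(job)
```

Each job dictionary would then be handed to whatever generation tool you use; comparing outputs across the same set of jobs keeps the model comparison fair.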

Tools and Templates Speed Up Production

Templates and curated presets reduce experimentation time. Popular template types include social ad formats, product reveal sequences, cinematic opening shots, and character interactions. One platform that centralizes models, production workflows, and templates is Pollo AI. Pollo AI describes itself as an all-in-one agency and AI video generator that supports text-to-video, image-to-video, reference-to-video, frames-to-video, and more.

Pollo AI provides access to multiple leading models, such as Veo 3, Wan AI, and Sora, plus a large library of templates (including trending ones such as AI Kissing). Pollo AI’s app and agency-style support help teams choose the right model, run pilots, manage iterations, and deliver final assets without wiring together a custom stack.

Use Cases and Examples

- Marketing personalization: Generate short localized ads by swapping references (actor, background) while keeping brand color and pacing consistent.

- Product demos: Use image-to-video to animate a product photo for a quick feature highlight reel.

- Storyboarding and previsualization: Turn a sequence of keyframes into a rough animatic using frames-to-video.

- Social clips: Rapidly produce stylized content with templates optimized for vertical formats and short durations.

Ethics, Rights, and Quality Control

- Always get consent for likeness and voice use.

- Verify you hold rights to any images, clips, or music used as references or assets.

- Label AI-generated content clearly where required by platform or law.

- Build review steps to catch hallucinations, artifacts, or misleading content before publishing.

Tips to Get the Most Out of AI

- Keep references consistent: similar resolution, lighting, and framing.

- Test multiple models for the same brief—each has distinct strengths.

- Use layered outputs when possible (alphas, foreground/background) for better compositing.

- Start with short clips and scale up; long continuous sequences are still harder to keep consistent.

- Maintain a reference library and prompt history for reproducibility.
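For the last tip, a prompt history can be as simple as an append-only log that records the model, prompt, settings, and a fingerprint of each reference file. The sketch below uses only the Python standard library; the function names, the record fields, and the JSON Lines file layout are assumptions chosen for illustration, not a format required by any video tool.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def file_digest(path):
    """SHA-256 of a reference file, so later edits to the file are detectable."""
    return hashlib.sha256(Path(path).read_bytes()).hexdigest()


def log_run(history_path, model, prompt, reference_paths, settings):
    """Append one generation run to a JSON Lines history file and return the record."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model": model,
        "prompt": prompt,
        # Map each reference path to its content hash.
        "references": {str(p): file_digest(p) for p in reference_paths},
        "settings": settings,
    }
    with open(history_path, "a", encoding="utf-8") as f:
        f.write(json.dumps(record, sort_keys=True) + "\n")
    return record
```

Hashing the references matters because a file name alone does not tell you whether the image was re-cropped or regraded between runs; a changed digest flags that an earlier result can no longer be reproduced from the current file.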

Final Thoughts

AI video generators are maturing rapidly. Text-to-video is great for quick ideation, image- and reference-based workflows provide control and consistency, and frames-to-video helps translate storyboards into motion. Platforms that combine model access, templates, and production support can dramatically shorten the path from concept to polished deliverable.